Artificial Intelligence Technology and Systems (AITS) Laboratory

Technical areas: Machine learning, AI, deep learning, hardware accelerators, hardware introspection, self-aware systems, secure and private ML, ML systems, sparse neural networks, secure ML accelerators.

Current Projects

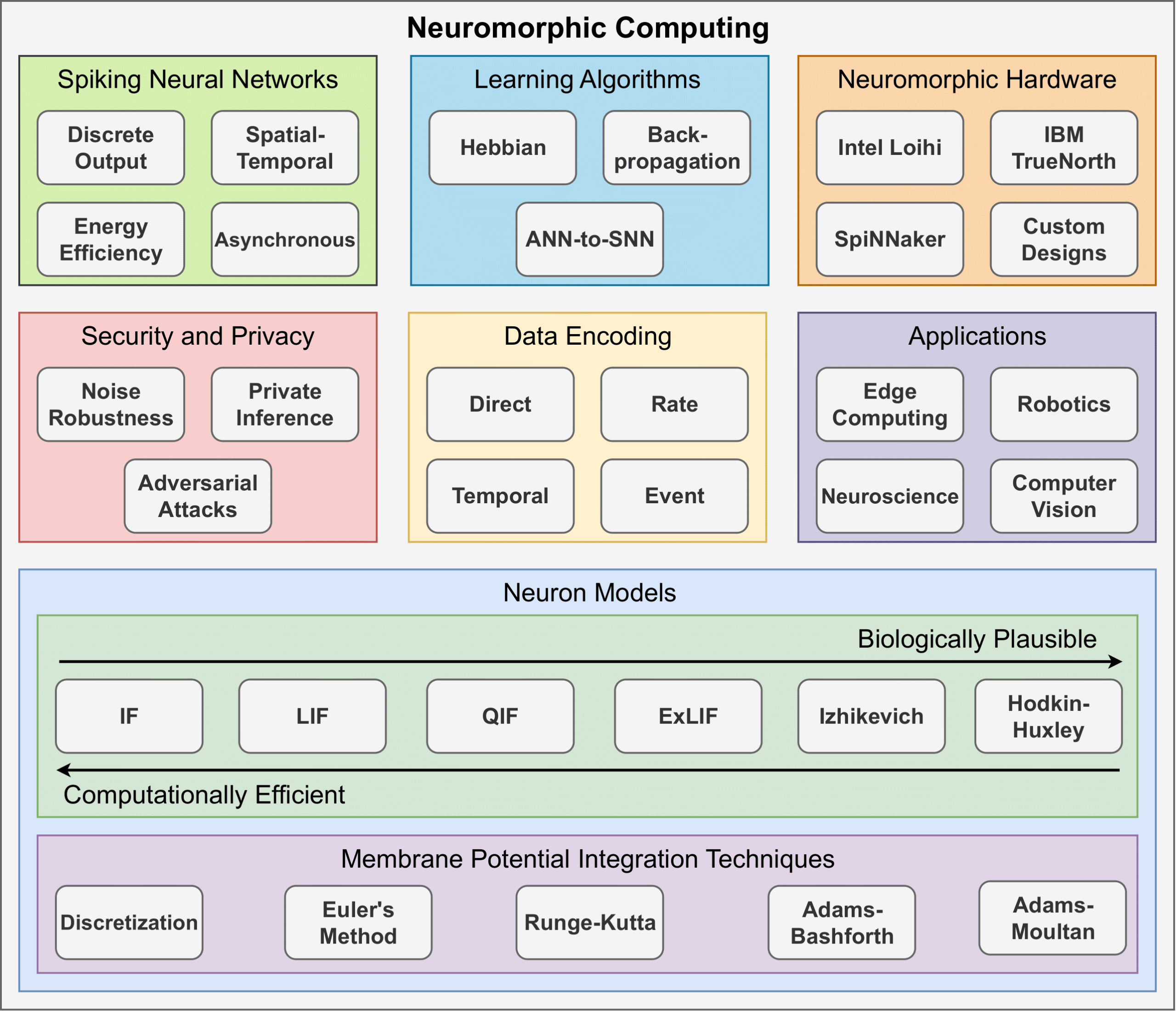

Neuromorphic Computing

Artificial Neural Networks (ANNs) have gained mainstream adoption in recent years, largely due to their success in diverse domains, including computer vision and natural language processing. However, the energy demands of ANNs continue to grow. Neuromorphic computing seeks to mitigate these energy demands by leveraging Spiking Neural Networks (SNNs). Unlike traditional ANNs, which synchronously process continuous-valued data, SNNs operate asynchronously on discrete events known as spikes. These spikes, driven by biologically inspired neuron dynamics, allow SNNs to replicate the brain’s sparse connectivity and energy-efficient structure. As a result, when SNNs are implemented on hardware tailored to these characteristics, they have the potential to operate with lower energy consumption than traditional ANN models.
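To make the event-driven behavior concrete, here is a minimal sketch of a discrete-time leaky integrate-and-fire (LIF) neuron, a common textbook model. It is an illustration only and is not necessarily the neuron model used in the lab's work; the function name `simulate_lif` and the parameters `threshold`, `leak`, and `v_reset` are assumptions chosen for the example.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_current: sequence of input values, one per time step.
    Returns a list of binary spikes (1 = spike, 0 = silent), one per step.
    All parameter names and defaults are illustrative assumptions.
    """
    v = v_reset  # membrane potential
    spikes = []
    for current in input_current:
        # Leaky integration: the old potential decays, then the input is added.
        v = leak * v + current
        if v >= threshold:
            # The neuron emits a discrete event and its potential is reset.
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    # A constant input produces a sparse, periodic spike train.
    print(simulate_lif([0.5] * 10))
```

The output is silent on most time steps, which is the kind of sparsity that event-driven hardware could exploit: downstream computation is only needed when a spike occurs.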

Current efforts in our lab include algorithmic improvements to neuron models, new computational models to drive neuron dynamics, the design of neuromorphic architectures and accelerators, and secure, private training and deployment of SNNs.

Privacy-Preserving Machine Learning Development Framework

Deep learning has become a cornerstone of modern machine learning, powering a wide range of applications across various domains, including image and speech recognition, traffic prediction, and fraud detection. Despite its success, deep learning models often rely on vast amounts of data that may originate from multiple or highly sensitive sources. This dependence raises a critical challenge: how to preserve data privacy while still enabling collaborative and efficient machine learning.

This work presents a generalized open-source framework for secure and efficient deep learning training and inference using Homomorphic Encryption. This framework supports both Spiking Neural Networks and Convolutional Neural Networks, providing privacy-preserving computation without compromising model functionality. We developed optimized implementations of essential deep learning operations. Additionally, we proposed techniques for securely evaluating nonlinear functions through approximations, scheme switching, and polynomial activation models. Current efforts are focused on developing efficient techniques for training homomorphically encrypted models.
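As a rough illustration of why nonlinear functions need approximation, note that homomorphic encryption schemes natively evaluate additions and multiplications, so an activation such as the sigmoid is typically replaced by a polynomial. The sketch below works on plaintext values only and uses no encryption library. It uses the degree-3 Taylor expansion of the sigmoid as a stand-in; the approximations actually used by the framework are not specified here, and the names `SIGMOID_TAYLOR`, `poly_eval`, and `max_abs_error` are assumptions for the example.

```python
import math

# Taylor coefficients of the sigmoid around 0, lowest degree first:
# sigmoid(x) ~ 1/2 + x/4 - x^3/48  (illustrative choice, not the framework's)
SIGMOID_TAYLOR = [0.5, 0.25, 0.0, -1.0 / 48.0]


def poly_eval(coeffs, x):
    """Evaluate a polynomial with Horner's rule.

    Only additions and multiplications are used, which are the operations
    a homomorphic scheme can apply to encrypted values.
    """
    acc = 0.0
    for c in reversed(coeffs):
        acc = acc * x + c
    return acc


def sigmoid(x):
    """Exact sigmoid, used here only as a plaintext reference."""
    return 1.0 / (1.0 + math.exp(-x))


def max_abs_error(coeffs, lo, hi, steps=200):
    """Largest gap between the polynomial and the sigmoid on [lo, hi]."""
    worst = 0.0
    for k in range(steps + 1):
        x = lo + (hi - lo) * k / steps
        worst = max(worst, abs(poly_eval(coeffs, x) - sigmoid(x)))
    return worst


if __name__ == "__main__":
    print(max_abs_error(SIGMOID_TAYLOR, -1.0, 1.0))
    print(max_abs_error(SIGMOID_TAYLOR, -4.0, 4.0))
```

The error is small near zero and grows quickly outside that range, which is one reason inputs are usually normalized to a bounded interval, or higher-degree or fitted polynomials are used, when activations are approximated this way.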

Fair, Robust, and Data Quality-Aware Federated Learning

As data becomes increasingly distributed across organizations, devices, and individuals, the ability to learn collaboratively without centralizing sensitive information has become both a technological and ethical imperative. Federated learning offers a pathway to harness collective intelligence while preserving privacy and data ownership. However, societal challenges such as unequal access to data, inconsistent data quality, and systemic bias threaten to exacerbate inequities in AI outcomes.

One particular effort focuses on medical institutions seeking to train models on MRI data without sharing patient information. However, differences in data quality, imaging protocols, and demographics can create bias and unreliable performance across institutions. We are exploring how fair and data quality-aware federated learning can mitigate these issues.
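One simple way to picture data quality-aware aggregation is a weighted variant of federated averaging, in which each institution's model update is weighted by its sample count multiplied by a quality score. The sketch below is a hypothetical illustration, not the lab's algorithm: the `aggregate` function, the tuple layout, and the idea of a scalar quality score in [0, 1] are all assumptions.

```python
def aggregate(client_updates):
    """Weighted average of client model parameters.

    client_updates: list of (params, num_samples, quality) tuples, where
    params is a list of floats and quality is a hypothetical score in [0, 1].
    Each client's weight is num_samples * quality.
    """
    weights = [n * q for _, n, q in client_updates]
    total = sum(weights)
    if total <= 0:
        raise ValueError("at least one client needs a positive weight")
    dim = len(client_updates[0][0])
    return [
        sum(w * params[k] for w, (params, _, _) in zip(weights, client_updates)) / total
        for k in range(dim)
    ]


if __name__ == "__main__":
    # Two hypothetical institutions with equal data volume; the second has
    # lower-quality scans, so its update counts for half as much.
    updates = [
        ([1.0, 2.0], 100, 1.0),
        ([3.0, 4.0], 100, 0.5),
    ]
    print(aggregate(updates))
```

With equal quality scores this reduces to standard sample-weighted federated averaging. How a quality score should be estimated without inspecting raw patient data, and how it interacts with fairness across institutions, are open design questions that this sketch does not address.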

The team is developing algorithms, protocols, and hardware solutions that allow designers to securely distribute their models without the risk of exfiltration.

Trustworthy and Privacy-Preserving Machine Learning Systems

Companies pushing to incorporate artificial intelligence, and machine learning in particular, into their Internet of Things (IoT), system-on-chip (SoC), and automotive applications must address design challenges related to the secure deployment of machine learning models and techniques.

Securing Execution of Neural Network Models on Edge Devices

Deploying neural network models in the cloud is not always feasible or effective. If an application requires near-instantaneous inference and cannot tolerate the round-trip latency of calls to a remote cloud server, edge computation is often the only viable solution. These models are often trained on private datasets that are expensive to collect or highly sensitive, and training them consumes large amounts of computing power. They are commonly exposed through online APIs or embedded in hardware devices that are deployed in the field or handed to end users. This exposure gives adversaries an incentive to steal the models themselves as a proxy for gathering the datasets.

Publications

- E. Jahns, D. Moreno, and M. A. Kinsy, ‘Learning Neuron Dynamics within Deep Spiking Neural Networks’, arXiv [cs.NE]. 2025.

- E. Jahns, D. Moreno, M. Stojkov, and M. A. Kinsy, ‘Discretized Quadratic Integrate-and-Fire Neuron Model for Deep Spiking Neural Networks’, arXiv [cs.LG]. 2025.

- N. B. Njungle, E. Jahns, and M. A. Kinsy, ‘FHEON: A Configurable Framework for Developing Privacy-Preserving Neural Networks Using Homomorphic Encryption’, arXiv [cs.CR]. 2025.

- N. B. Njungle, E. Jahns, M. Stojkov, and M. A. Kinsy, ‘PrivSpike: Employing Homomorphic Encryption for Private Inference of Deep Spiking Neural Networks’, arXiv [cs.CR]. 2025.

- M. Isakov, M. Currier, E. del Rosario, S. Madireddy, P. Balaprakash, P. H. Carns, R. Ross, G. K. Lockwood, and M. A. Kinsy, ‘A Taxonomy of Error Sources in HPC I/O Machine Learning Models’, in International Conference for High Performance Computing, Networking, Storage, and Analysis (SC), 2022.

- G. Dessouky, M. Isakov, M. A. Kinsy, P. Mahmoody, M. Mark, A. Sadeghi, E. Stapf, and S. Zeitouni, ‘Distributed Memory Guard: Enabling Secure Enclave Computing in NoC-based Architectures’, in 58th ACM/EDAC/IEEE Design Automation Conference (DAC), 2021.

Technical Lead