Research Projects

Two forces drive the need for, and innovation in, secure and trusted microelectronics, or simply hardware security. The first is the evolving nature of user applications, which demand greater security, privacy, and edge computation. The second is the introduction of new devices that can be leveraged to reinforce the security posture of microelectronics and to provide a better security anchor for the whole computing infrastructure (hardware, firmware, and software). The center's laboratories and research activities are organized to integrate these two forces.

Current Projects

Using Security-Aware Reconfigurable Interposers: A new 2.5-D active-interposer design methodology that uses reconfigurable logic for security applications. We embed reconfigurable fabric in the active interposer; the fabric can be programmed at the integration and end-use stages to provide hardware root-of-trust security guarantees.

Zero-Trust SoC Design: Building Secure System-on-Chip Designs from Untrusted Components

On SoC platforms with many processing elements, the runtime interactions among those elements can be very complex and difficult to analyze fully at design time.

The key innovation of the approach is that security is provided through (i) hardware virtualization that is completely independent of the processing elements themselves, and (ii) runtime establishment of trust among the elements.

The approach aims to reduce the system's attack surface by creating a virtualization layer that isolates compute threads based on system- and user-defined trust levels.

This project contributes to the protection of electronic systems against side-channel and malicious-hardware attack classes.
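As a rough illustration of the two ideas above (a mediation layer that is independent of the processing elements, and trust established at runtime), the Python sketch below models a hypothetical virtualization layer. The class and method names, the three-level `Trust` scale, and the use of a SHA-256 firmware measurement as the runtime trust check are all assumptions made for illustration; the actual design is implemented in hardware and is not specified here.

```python
import hashlib
import hmac
from enum import IntEnum


class Trust(IntEnum):
    """Hypothetical trust levels; higher values are more trusted."""
    UNTRUSTED = 0
    USER = 1
    SYSTEM = 2


class IsolationViolation(Exception):
    pass


class VirtualizationLayer:
    """Hypothetical mediator between processing elements and shared memory.

    Every access goes through this layer, so enforcement does not depend on
    the (untrusted) processing elements behaving correctly.
    """

    def __init__(self):
        self.threads = {}  # thread id -> Trust
        self.regions = {}  # region name -> (required Trust, bytearray)

    def register_thread(self, tid, level=Trust.UNTRUSTED):
        self.threads[tid] = level

    def register_region(self, name, required, size):
        self.regions[name] = (required, bytearray(size))

    def establish_trust(self, tid, firmware, expected_digest, level):
        """Runtime trust: promote a thread only if its measured firmware matches."""
        measured = hashlib.sha256(firmware).digest()
        if not hmac.compare_digest(measured, expected_digest):
            raise IsolationViolation(f"thread {tid}: measurement mismatch")
        self.threads[tid] = level

    def access(self, tid, region, offset, value=None):
        """Read (value=None) or write one byte if the thread's trust suffices."""
        if tid not in self.threads:
            raise IsolationViolation(f"unknown thread {tid}")
        required, mem = self.regions[region]
        if self.threads[tid] < required:
            raise IsolationViolation(f"thread {tid} may not access {region}")
        if value is None:
            return mem[offset]
        mem[offset] = value
        return value
```

A newly registered DMA engine starts as `UNTRUSTED` and is refused access to a `SYSTEM` region until its firmware measurement matches the expected digest, after which the same request succeeds.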

BGAS/Zeno: Capability-Aware System Design Prototype

Despite the efforts of numerous security researchers, memory-safety vulnerabilities continue to plague modern computing systems.

Capability-based systems attempt to prevent such violations by validating access permissions in hardware. However, the high performance cost of existing capability-based systems remains a barrier to their adoption.
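To make hardware-validated permissions concrete, the sketch below models a capability as a pointer that carries its own bounds and permissions, with every load and store checked against them. It is a simplified software model under our own assumptions (the `Capability` fields, single-byte accesses, and the `CapabilityFault` exception are hypothetical), not the BGAS/Zeno hardware design; real capability hardware also protects the capability itself from forgery.

```python
from dataclasses import dataclass


class CapabilityFault(Exception):
    """Raised where capability hardware would trap."""


@dataclass(frozen=True)
class Capability:
    base: int          # first addressable byte
    length: int        # number of addressable bytes
    perms: frozenset   # subset of {"R", "W"}

    def check(self, addr, size, perm):
        if perm not in self.perms:
            raise CapabilityFault(f"missing {perm} permission")
        if addr < self.base or addr + size > self.base + self.length:
            raise CapabilityFault(f"access [{addr}, {addr + size}) out of bounds")


class Memory:
    """Byte-addressable memory in which every access must present a capability."""

    def __init__(self, size):
        self.data = bytearray(size)

    def load(self, cap, addr):
        cap.check(addr, 1, "R")
        return self.data[addr]

    def store(self, cap, addr, value):
        cap.check(addr, 1, "W")
        self.data[addr] = value
```

For an 8-byte buffer described by `Capability(16, 8, frozenset({"R", "W"}))`, a store to address 23 succeeds, while a store to address 24, the classic off-by-one overflow, raises `CapabilityFault` instead of silently corrupting adjacent memory.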

Under this research effort, the team introduces a new way of building memory-safe systems that can fulfil modern high-performance compute requirements.

The system spans every layer of the compute stack needed to develop memory-safe applications, including processors, compilers, the operating system, and runtime support libraries.

The system includes the following subprojects: Zeno, CORDON, RAIL, Panoptes, and SecProbe.

Hardware-assisted secure HPC/data-center scale shared memory: Zeno is a new security-aware scalable architecture for shared, distributed memory, high performance computing (HPC) systems. Its micro-architectural support for shared memory enables security-aware data sharing between different processors in a single HPC system without hindering scalability.

The Zeno architecture specifications, synthesized design, system support software, and programming tools will be open-sourced.

Zeno Architecture: A Secure High-Performance Computing RISC-V Processor Design

Historically, the HPC field has evolved into an ecosystem in which a small number of systems are commissioned by a government agency through a computer system vendor using (i) proprietary intellectual property (IP) blocks and (ii) opaque or rigid system architectures.

One can argue that the lack of open-architecture concepts in HPC and server-class systems has severely hindered innovation in the field. System-level security research is generally abandoned altogether because researchers lack visibility into, or access to, the micro-architectural details needed to test, analyze, or validate vulnerabilities, threats, or risks.

Under this research effort, the team has introduced a new open-source server architecture, called Zeno, in which high performance and security are both first-class system characteristics.

This project contributes to security and scalability of next-generation high-performance computing systems.

FHEON: A Configurable Framework for Developing Privacy-Preserving Neural Networks Using Homomorphic Encryption

Privacy-preserving machine learning seeks to protect the confidentiality of both data and models, ensuring that sensitive information remains secure throughout training and inference. Although Homomorphic Encryption is regarded as one of the most promising approaches for this purpose, there is still a lack of configurable frameworks that enable the development of privacy-preserving applications comparable to those available in conventional machine learning.

Researchers at the Lab are developing FHEON, an open-source framework designed to enable privacy-preserving neural network training and inference using Homomorphic Encryption. The research focuses on designing and implementing new, more efficient algorithms for fundamental machine learning primitives that are friendly to fully homomorphic encryption (FHE). FHEON provides a suite of efficient primitives for encrypted computation, including support for encrypted convolutional neural networks, spiking neural networks, high-throughput batched neural networks, and privacy-preserving training mechanisms.
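One widely used FHE-friendly technique, shown here only as an illustration and not necessarily the one FHEON adopts, replaces non-polynomial activations such as ReLU with low-degree polynomials, because common FHE schemes evaluate only additions and multiplications on ciphertexts. The Python sketch below works on plaintext floats; the degree-2 coefficients come from a least-squares fit of ReLU on the assumed input range [-1, 1] (fitting |x| ≈ a + b·x² gives a = 3/16 and b = 15/16, and ReLU(x) = (x + |x|)/2).

```python
# Least-squares fit of ReLU(x) = (x + |x|) / 2 on [-1, 1] with a degree-2
# polynomial. Fitting |x| ~ a + b*x^2 gives a = 3/16, b = 15/16, so
# ReLU(x) ~ 3/32 + x/2 + (15/32) x^2.
RELU_COEFFS = (3 / 32, 1 / 2, 15 / 32)  # c0, c1, c2


def relu(x):
    return max(0.0, x)


def poly_relu(x, coeffs=RELU_COEFFS):
    """Evaluate the polynomial with Horner's rule, using only + and *,
    the operations an FHE scheme can apply to ciphertexts."""
    acc = 0.0
    for c in reversed(coeffs):
        acc = acc * x + c
    return acc


def max_error(lo=-1.0, hi=1.0, steps=2001):
    """Largest |poly_relu - relu| over an evenly spaced grid."""
    xs = [lo + (hi - lo) * i / (steps - 1) for i in range(steps)]
    return max(abs(poly_relu(x) - relu(x)) for x in xs)


if __name__ == "__main__":
    print(f"max |poly_relu - relu| on [-1, 1]: {max_error():.5f}")
```

The worst-case error on the fitted range is 3/32 (at x = 0). Inputs outside [-1, 1] must be scaled into it first, since the approximation degrades quickly beyond the fitted interval.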

Low-Power and Advanced Encryption

- Quantum-Proof Lightweight McEliece Cryptosystem Co-processor Design

- Fast Arithmetic Hardware Library For RLWE-Based Homomorphic Encryption

- A Post-Quantum Secure Discrete Gaussian Noise Sampler

- Open-Source FPGA Implementation of Post-Quantum Cryptographic Hardware Primitives
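Arithmetic libraries for RLWE-based schemes center on multiplication in the ring R_q = Z_q[x]/(x^n + 1), where reducing by x^n + 1 turns ordinary convolution into a negacyclic one (x^n wraps around to -1). The schoolbook O(n²) Python version below is only a reference sketch that pins down the arithmetic; hardware libraries typically use the Number Theoretic Transform (NTT) for speed, and the function name and coefficient-list representation are our own assumptions.

```python
def negacyclic_mul(a, b, q):
    """Multiply a(x) * b(x) in Z_q[x]/(x^n + 1).

    a and b are coefficient lists of equal length n (index i holds the
    coefficient of x^i). Terms of degree >= n wrap around with a sign flip,
    because x^n = -1 in this ring.
    """
    n = len(a)
    if len(b) != n:
        raise ValueError("operands must have the same degree bound n")
    result = [0] * n
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            k = i + j
            if k < n:
                result[k] = (result[k] + ai * bj) % q
            else:
                result[k - n] = (result[k - n] - ai * bj) % q
    return result
```

For n = 4 and q = 17, multiplying x³ by x gives x⁴ = -1, so `negacyclic_mul([0, 0, 0, 1], [0, 1, 0, 0], 17)` returns `[16, 0, 0, 0]`. A hardware or NTT-based implementation can be checked against this reference on random inputs.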

Homomorphic-Encryption Enabled RISC-V (HERISCV) Architecture

Large Arithmetic Word Size (LAWS): Word size directly affects the signal-to-noise ratio (SNR) with which a ciphertext is stored and manipulated during computation. No current hardware architecture natively supports the register sizes or the execution units that perform the fundamental mathematical and logical operations of lattice-based FHE schemes.

ISA-Based Reconfigurable Architecture: Given the evolving state of improvements and new schemes in the field of FHE, the HERISCV architecture aims to provide an array of operations and functionality instead of optimizing the hardware for one particular algorithm. By providing instruction support for the core operations (e.g., lattices, polynomials, arithmetic, logic, and finite fields), the architecture delivers significant performance gains for lattice-based FHE applications along with the flexibility to experiment with new designs.
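Modular multiplication over large moduli is one of the core finite-field operations such an ISA would expose. A common division-free way to implement it, in hardware or software, is Barrett reduction, sketched below in Python with arbitrary-precision integers standing in for wide registers. This is a generic textbook technique used for illustration; it does not describe HERISCV's actual instructions, and the class name is hypothetical.

```python
class BarrettReducer:
    """Division-free reduction modulo q for products of two residues.

    With k = bit length of q and mu = floor(4^k / q), the estimate
    t = floor(x * mu / 4^k) is at most one below floor(x / q) for any
    0 <= x < q^2, so a single conditional subtraction finishes the job.
    """

    def __init__(self, q):
        if q < 2:
            raise ValueError("modulus must be at least 2")
        self.q = q
        self.k = q.bit_length()
        self.mu = (1 << (2 * self.k)) // q  # precomputed once per modulus

    def reduce(self, x):
        if not 0 <= x < self.q * self.q:
            raise ValueError("input must lie in [0, q^2)")
        t = (x * self.mu) >> (2 * self.k)  # quotient estimate via shift, no division
        r = x - t * self.q
        while r >= self.q:                 # executes at most once for valid inputs
            r -= self.q
        return r

    def mulmod(self, a, b):
        return self.reduce(a * b)
```

For example, `BarrettReducer((1 << 127) - 1).mulmod(a, b)` performs a 127-bit modular multiply using only multiplications, shifts, and subtractions, the kind of operations a wide datapath would provide.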

This project advances our ability to perform secure and privacy-preserving computation at scale.

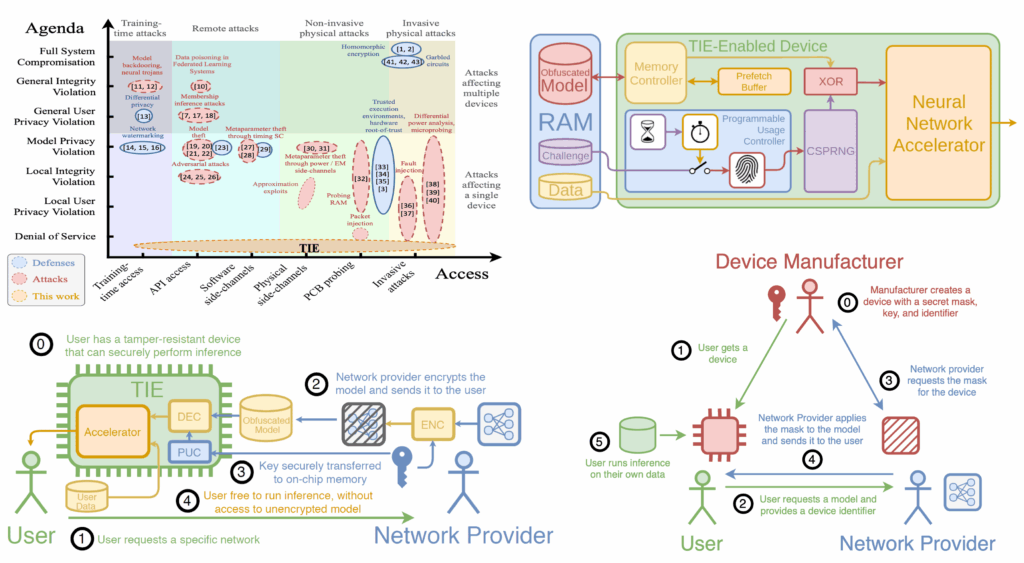

Trustworthy and Privacy-Preserving Machine Learning Systems

Companies, in their push to incorporate artificial intelligence, and machine learning in particular, into their Internet of Things (IoT), system-on-chip (SoC), and automotive applications, will have to address a number of design challenges related to the secure deployment of machine learning models and techniques.

Securing Execution of Neural Network Models on Edge Devices

Neural network model deployment in the cloud may not be feasible or effective in many cases. If an application requires near-instantaneous inference and cannot tolerate the round-trip latency of calls to a remote cloud server, edge computation is often the only viable solution. The models are often trained on private datasets that are expensive to collect or highly sensitive, using large amounts of computing power. They are commonly exposed either through online APIs or in hardware devices deployed in the field or given to end users. This gives adversaries an incentive to steal these models as a proxy for gathering the datasets themselves.

The team is developing algorithms, protocols, and hardware solutions that allow designers to securely distribute their models without the risk of exfiltration. Our Trusted Inference Engine (TIE) architecture protects non-volatile memory against probing attacks and prevents API-based model extraction by enforcing rate limits on queries. Combined with our cryptographically secure anonymous authentication protocol, TIE provides the desired authentication and privacy functionality while offering strong security guarantees for edge deployments.
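TIE enforces its query limits in hardware; the Python sketch below only illustrates the underlying idea of rate limiting against API-based extraction with a software token bucket, which caps the sustained query rate an attacker can achieve while still allowing short bursts. The class, its parameters, and the `guarded_infer` wrapper are hypothetical, and the clock is injectable so the behavior can be tested deterministically.

```python
import time


class RateLimitExceeded(Exception):
    pass


class TokenBucket:
    """Allow bursts of up to `capacity` queries and `rate` queries/second sustained."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = float(rate)
        self.capacity = float(capacity)
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


def guarded_infer(bucket, model, x):
    """Run inference only if the caller is within its query budget."""
    if not bucket.allow():
        raise RateLimitExceeded("query budget exhausted")
    return model(x)
```

Because model-extraction attacks typically need a large number of queries, bounding the sustained rate raises the cost of extraction without noticeably affecting legitimate, low-rate use.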

This project advances our ability to securely deploy machine learning applications with privacy-preserving guarantees.

AtoNet – Adaptive Topology and Self-Organizing Networks for Decentralized IoT Systems

AtoNet represents a step toward self-organizing, intelligent IoT ecosystems, where trust, adaptability, and topology awareness merge to ensure continuous operation, security, and autonomy in large decentralized infrastructures.

AtoNet explores how large-scale IoT and edge systems can autonomously reconfigure their topology and adapt roles dynamically in response to changing conditions such as failures, congestion, or malicious behavior. The project aims to create fully decentralized, trust-driven networks capable of maintaining performance, resilience, and coordination without centralized control.

Our ongoing research on AtoNet investigates:

- Adaptive topology evolution, where the network transitions across multiple structures (star, mesh, tree, cluster, hybrid) according to real-time conditions such as latency, load, and trust.

- Decentralized role management, allowing nodes to be promoted, demoted, or reassigned autonomously based on behavioral trust and capability scores.

- Behavioral validation and fault differentiation, distinguishing benign resource faults (e.g., low battery, disconnection) from malicious anomalies through challenge–response analysis.

- Resilient recovery and continuity mechanisms, where nodes elect new leaders and rebuild clusters locally after failures without global synchronization.

- Scalable adaptation across heterogeneous devices, bridging IoT sensors, edge nodes, and embedded platforms under dynamic and constrained environments.
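The toy Python model below illustrates two of these ideas, trust-driven role management and local leader re-election. The trust update rule (an exponential moving average of challenge–response outcomes), the decision to treat timeouts as benign faults that take a node offline without lowering its trust, the scoring function, and all thresholds are hypothetical choices for illustration, not AtoNet's actual algorithms.

```python
from dataclasses import dataclass

ALPHA = 0.3       # hypothetical smoothing factor for trust updates
MIN_TRUST = 0.6   # hypothetical threshold for holding any active role


@dataclass
class Node:
    node_id: int
    capability: float   # hypothetical 0..1 score (compute, battery, link quality)
    trust: float = 0.5
    alive: bool = True
    role: str = "member"


def record_challenge(node, outcome):
    """Update a node after a challenge-response round.

    'ok'      -> correct answer, trust moves toward 1
    'wrong'   -> incorrect answer (possible malice), trust moves toward 0
    'timeout' -> benign resource fault: node goes offline, trust is kept
    """
    if outcome == "timeout":
        node.alive = False
        return
    if outcome not in ("ok", "wrong"):
        raise ValueError(f"unknown outcome {outcome!r}")
    target = 1.0 if outcome == "ok" else 0.0
    node.trust = (1 - ALPHA) * node.trust + ALPHA * target


def elect_leader(nodes):
    """Assign roles locally; the most trusted, most capable live node leads."""
    eligible = [n for n in nodes if n.alive and n.trust >= MIN_TRUST]
    leader = max(eligible, key=lambda n: (n.trust * n.capability, -n.node_id), default=None)
    for n in nodes:
        if not n.alive:
            n.role = "offline"
        elif n.trust < MIN_TRUST:
            n.role = "quarantined"
        else:
            n.role = "leader" if n is leader else "member"
    return leader
```

Separating timeouts from wrong answers is what lets a node with a dead battery rejoin later with its reputation intact, while a node that answers challenges incorrectly is demoted to `quarantined` and loses leadership eligibility.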

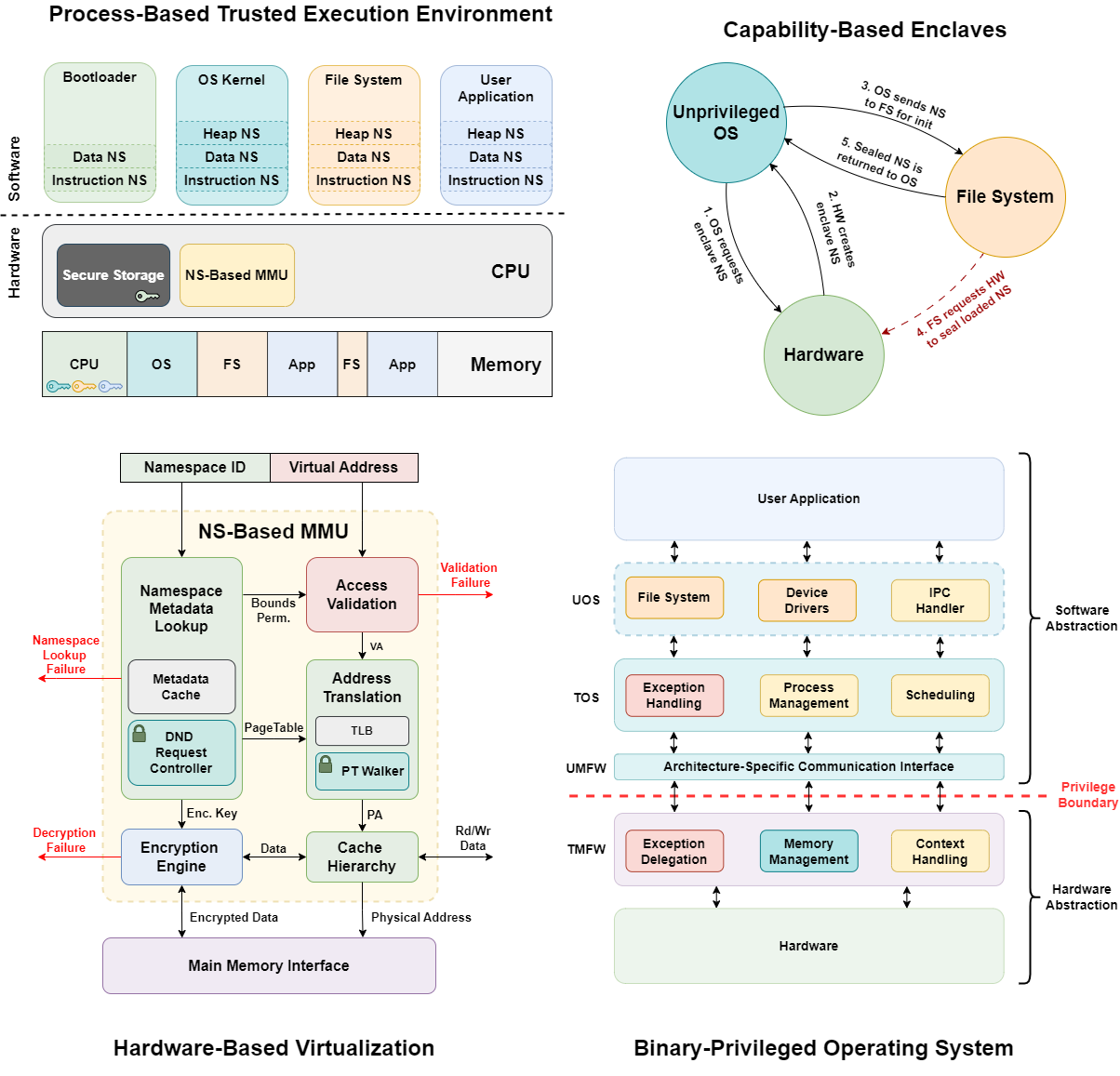

CORDON: Capability-Aware Microkernel and Trusted Execution Environment

Current trusted execution environments (TEEs) protect applications from privileged software but remain limited by rigid abstractions and kernel-mediated trust. VM-based designs offer coarse isolation, while enclave-based systems rely on static memory partitioning and kernel coordination, restricting scalability and fine-grained sharing.

CORDON rethinks this model by introducing process-level isolation built entirely on hardware-managed capabilities. Each process executes within its own sealed Namespace, a hardware-validated domain that enforces permissions, bounds, and confidentiality independently of the operating system. This eliminates the need for kernel cooperation in enforcing isolation or mediating communication.

CORDON’s capability contracts provide a runtime mechanism for secure delegation, revocation, and cross-process function calls, enabling fine-grained collaboration among mutually distrusting components. The design combines a minimal trusted computing base with a microkernel-style software stack that supports distributed operation without relying on privileged monitors.
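The delegation and revocation semantics described above can be sketched in Python as a derivation tree in which a delegated capability may only narrow its parent's bounds and permissions, and revoking any capability invalidates everything derived from it. This is a software model under our own assumptions (the `Cap` class, owner strings, and the ancestor-walk validity check are hypothetical); CORDON itself enforces these rules in hardware within sealed Namespaces.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


class CapabilityError(Exception):
    pass


@dataclass(eq=False)
class Cap:
    base: int
    length: int
    perms: frozenset
    owner: str
    parent: Optional[Cap] = None
    revoked: bool = False

    def valid(self):
        """A capability is valid only if it and every ancestor are unrevoked."""
        node = self
        while node is not None:
            if node.revoked:
                return False
            node = node.parent
        return True

    def delegate(self, to, base, length, perms):
        """Derive a child capability; derivation is monotonic (never widens)."""
        perms = frozenset(perms)
        if not self.valid():
            raise CapabilityError("cannot delegate from a revoked capability")
        if not perms <= self.perms:
            raise CapabilityError("cannot amplify permissions")
        if base < self.base or base + length > self.base + self.length:
            raise CapabilityError("cannot widen bounds")
        return Cap(base, length, perms, to, parent=self)

    def revoke(self):
        self.revoked = True
```

If process A delegates a read-write window to B, and B delegates a read-only sub-window to C, then revoking B's capability immediately invalidates C's as well, while A's original capability remains usable.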

CORDON serves as a foundation for scalable, capability-oriented TEEs that unify process isolation and secure delegation under a common hardware abstraction.

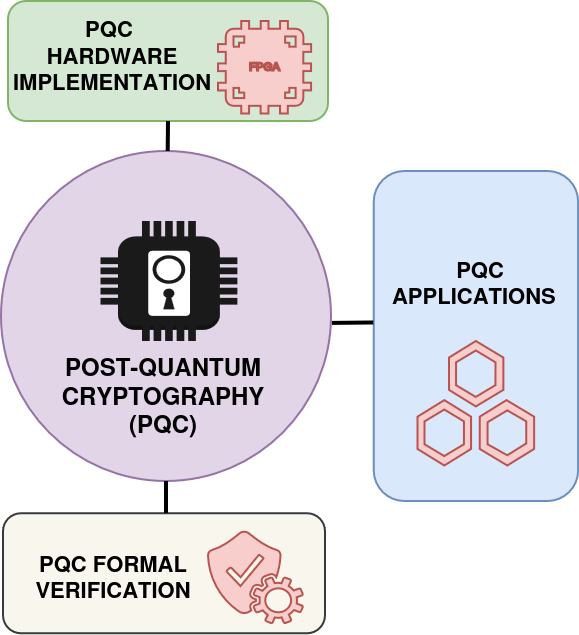

Post-Quantum Cryptographic Acceleration, Formal Verification, and Applications

At the Lab, we are focused on advancing the security and efficiency of Post-Quantum Cryptography (PQC) through accelerated PQC implementations, formal verification, and practical application development. We design and provide hardware modules for leading PQC families, including lattice-based, isogeny-based, and hash-based cryptography. Our work adheres closely to the NIST standardization guidelines, ensuring that our PQC solutions are applicable, usable, and deployable across a wide range of applications and hardware platforms.

Our research team is also dedicated to the secure implementation of PQC algorithms. This work includes formalizing NIST-standardized schemes to guarantee both functional correctness and side-channel resistance. Beyond implementation, we actively explore algorithmic innovations and extensions of PQC primitives for emerging domains. A key example is our effort to integrate PQC into low-power, connected IoT and distributed systems, where performance and security must coexist under strict resource constraints.
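As a minimal illustration of how hash-based signatures rely only on the security of a hash function, the Python sketch below implements the classic Lamport one-time signature with SHA-256. It is a teaching example, not one of the lab's hardware modules and not a standardized scheme: standardized hash-based signatures such as SLH-DSA (FIPS 205) build on related one-time and few-time signatures combined with Merkle trees, and a Lamport key pair must never sign more than one message.

```python
import hashlib
import secrets

N_BITS = 256  # SHA-256 digest length in bits


def _h(data):
    return hashlib.sha256(data).digest()


def keygen():
    """Secret key: 256 pairs of random 32-byte values; public key: their hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(N_BITS)]
    pk = [(_h(s0), _h(s1)) for s0, s1 in sk]
    return sk, pk


def _digest_bits(message):
    d = _h(message)
    return [(d[i // 8] >> (7 - i % 8)) & 1 for i in range(N_BITS)]


def sign(sk, message):
    """Reveal one secret from each pair, selected by the message digest bits."""
    return [sk[i][bit] for i, bit in enumerate(_digest_bits(message))]


def verify(pk, message, signature):
    """Hash each revealed secret and compare it with the matching public value."""
    if len(signature) != N_BITS:
        return False
    bits = _digest_bits(message)
    return all(_h(s) == pk[i][b] for i, (s, b) in enumerate(zip(signature, bits)))
```

Signing reveals half of the secret key, which is why reuse is fatal: a second signature on a different message reveals more secrets and lets an attacker mix them into forgeries.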

FAKAZI: Formal Infrastructure for the Development and Verification of Secure and Trust Critical Systems

Formal verification serves as a fundamental pillar for ensuring the reliability and security of computing systems. This work presents Fakazi, a novel infrastructure designed to address the challenges hindering the widespread adoption of formal verification. Fakazi offers a versatile formal verification (FV) engine and a user-friendly environment tailored to verifying critical systems, with a main focus on security vulnerabilities.

Fakazi contains two main components: a Static Analyzer and a Dynamic Analyzer. The static analyzer leverages automated theorem provers to reason about program correctness at the source level, while the dynamic analyzer performs dynamic binary analysis. The dynamic analyzer uses a fuzzing-guided engine built on symbolic execution and SMT solvers to verify runtime behavior and uncover potential security vulnerabilities, such as memory and constant-time violations.

This infrastructure is also designed to abstract the complexity of formal verification away from the development of critical systems. Our goal is to ensure provable correctness, trust, security, and robustness from algorithm design to implementation. The infrastructure is intended to automatically support formally verified primitives for PQC, FHE, and ZKP systems, while enhancing automation and scalability within the verification pipeline.
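A constant-time violation occurs when a program's control flow or memory accesses depend on secret data. The toy Python sketch below illustrates the property a constant-time checker looks for: it records an execution trace for several inputs and flags the function if the trace length changes. This crude dynamic check is only an illustration of the concept, not Fakazi's analysis (which relies on symbolic execution and SMT solving over binaries), and the helper names are hypothetical. In production Python code, `hmac.compare_digest` is the standard constant-time comparison.

```python
def leaky_equal(secret, guess, trace):
    """Early-exit comparison: the loop length reveals the matching prefix length."""
    if len(secret) != len(guess):
        return False
    for s, g in zip(secret, guess):
        trace.append("cmp")
        if s != g:
            return False
    return True


def ct_equal(secret, guess, trace):
    """Branch-free accumulation: always touches every byte."""
    if len(secret) != len(guess):
        return False
    diff = 0
    for s, g in zip(secret, guess):
        trace.append("cmp")
        diff |= s ^ g
    return diff == 0


def trace_is_input_independent(fn, secret, guesses):
    """Toy dynamic check: flag fn if its trace length varies across inputs.

    The trace is a stand-in for timing; it says nothing about how the Python
    interpreter itself executes the code.
    """
    lengths = set()
    for guess in guesses:
        trace = []
        fn(secret, guess, trace)
        lengths.add(len(trace))
    return len(lengths) == 1
```

With `leaky_equal`, a guess that matches the first byte produces a longer trace than one that does not, which is exactly the byte-by-byte oracle a timing attacker exploits; `ct_equal` produces the same trace for every guess of the correct length.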

eTAVA: Trustworthy and Assurance Validation and Analysis of security ICs

To analyze counterfeit chips, mitigate chip failures in mission-critical applications (defibrillators, pacemakers, and automotive systems), and assess the impact of counterfeit chips in medical devices and military applications, the team has introduced eTAVA (Emulation-based Trustworthy and Assurance Validation and Analysis), a software/hardware co-design platform for FPGA-based acceleration of the trustworthiness and assurance estimation and validation of IC designs.

eTAVA can be used in two modes: (1) to estimate and optimize the trustworthiness and assurance properties of an ASIC design before manufacturing through simulation and analysis, and (2) to perform post-fabrication validation of these properties in a hardware-in-the-loop setting, where the hardware is an FPGA-emulated version of the design.

This project advances methods and technologies for designing trustworthy and zero-trust secure electronics.

AQUILA: A Flexible Architecture Guideline for Building Custom Distributed Systems Testbeds

AQUILA represents a research infrastructure for the future of distributed systems, where heterogeneous devices operate under a shared orchestration layer to enable real-world experimentation, performance analysis, and validation of novel algorithms for reliability, security, and energy efficiency across the cloud–edge continuum.

The AQUILA project investigates how to build, interconnect, and orchestrate heterogeneous computing devices – from FPGAs to IoT nodes – into unified, scalable testbeds for distributed and edge systems research. Its goal is to make experimental infrastructures flexible, modular, and accessible, bridging the gap between large industrial platforms and purely simulated environments.

Building upon the AQUILA framework, our ongoing research focuses on:

- Cross-domain orchestration, enabling seamless task distribution and coordination across cloud, hybrid, and edge environments.

- Dynamic resource management, where devices with different capabilities (CPUs, FPGAs, microcontrollers) collaborate autonomously under a lightweight task manager.

- Heterogeneous communication protocols, combining UDP, MQTT, and serial bridges to integrate both IP-capable and resource-constrained nodes in a unified network.

- Hardware-software co-design, using real devices to emulate realistic network and compute behaviors that are not captured in simulators.

- Scalable experimentation methodology, allowing researchers to reproduce distributed workloads, failure scenarios, and adaptive control strategies in cost-effective setups.
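As a rough sketch of capability-aware task placement under a lightweight task manager, the Python example below assigns each task to the least-loaded device that offers every feature the task needs, and reports tasks that no device can host. The feature labels, the greedy load-balancing rule, and the device names are hypothetical and do not describe AQUILA's actual orchestration logic.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Task:
    name: str
    needs: frozenset  # features the task requires, e.g. {"fpga"}


@dataclass
class Device:
    name: str
    features: frozenset  # e.g. {"cpu", "mqtt"} or {"serial"}
    capacity: int        # maximum concurrent tasks
    tasks: list = field(default_factory=list)

    def can_run(self, task):
        return task.needs <= self.features and len(self.tasks) < self.capacity


def schedule(tasks, devices):
    """Greedy placement: most constrained tasks first, least-loaded capable device wins.

    Returns the tasks that no device could host.
    """
    unplaced = []
    for task in sorted(tasks, key=lambda t: len(t.needs), reverse=True):
        candidates = [d for d in devices if d.can_run(task)]
        if not candidates:
            unplaced.append(task)
            continue
        target = min(candidates, key=lambda d: (len(d.tasks) / d.capacity, d.name))
        target.tasks.append(task)
    return unplaced
```

In a testbed with one FPGA board, one single-board computer that speaks MQTT, and one serial-only microcontroller, an FPGA task lands on the board, MQTT and CPU tasks share the single-board computer, and a second FPGA task is reported as unplaced once the board is full.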

R-Visor: Dynamic Binary Instrumentation and Analysis Framework for Open Instruction Set Architectures

R-Visor provides a modular, highly extensible platform with a user-friendly instrumentation API, supporting diverse instrumentation capabilities.

Binary Instrumentation (BI) is a technique that alters an application binary to include new instructions, termed instrumentation code. It has a wide range of uses, such as emulating new instructions, collecting profiles for binary optimization, detecting malware, and translating binaries from one architecture to another.

Although BI is a mature field, its advancements have targeted closed ISAs such as x86 and ARM, even as interest in open ISAs such as RISC-V has recently surged because of their extensibility. Traditional instrumentation tools, built for closed ISAs, lack the adaptability to easily support new extensions. A key requirement for modern instrumentation tools is therefore easy extensibility.

R-Visor is an extensible, modular dynamic binary instrumentation (DBI) tool designed for open Instruction Set Architecture (ISA) ecosystems to facilitate architectural research and development. R-Visor addresses the extensibility challenge by leveraging the ArchVisor DSL, which allows users to readily incorporate new ISA extensions. R-Visor is open source and provides initial support for the RISC-V ISA.
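To give a flavor of what an instrumentation tool does at the lowest level, the Python sketch below decodes 32-bit RISC-V base-ISA instruction words and invokes a user callback on each one, here to build an instruction-class profile. It is a static toy, not R-Visor's API or the ArchVisor DSL; the function names are hypothetical, and a real DBI tool rewrites and executes code at runtime. Supporting a new extension in this toy amounts to adding its opcode to `OPCODE_CLASSES`, which hints at why a declarative description of the ISA helps extensibility.

```python
from collections import Counter

# Major opcodes (bits 6:0) of the RV32I base ISA.
OPCODE_CLASSES = {
    0x03: "LOAD", 0x13: "OP-IMM", 0x17: "AUIPC", 0x23: "STORE", 0x33: "OP",
    0x37: "LUI", 0x63: "BRANCH", 0x67: "JALR", 0x6F: "JAL", 0x73: "SYSTEM",
}
I_TYPE = {0x03, 0x13, 0x67, 0x73}  # opcodes whose bits 31:20 hold a 12-bit immediate


def decode(word):
    """Extract the common fields of a 32-bit RISC-V instruction word."""
    opcode = word & 0x7F
    insn = {
        "class": OPCODE_CLASSES.get(opcode, "UNKNOWN"),
        "rd": (word >> 7) & 0x1F,
        "funct3": (word >> 12) & 0x7,
        "rs1": (word >> 15) & 0x1F,
    }
    if opcode in I_TYPE:
        imm = (word >> 20) & 0xFFF
        insn["imm"] = imm - 0x1000 if imm & 0x800 else imm  # sign-extend 12 bits
    return insn


def instrument(words, callback, base_pc=0):
    """Call callback(pc, decoded_insn) for each instruction, in program order."""
    for i, word in enumerate(words):
        callback(base_pc + 4 * i, decode(word))


def class_profile(words):
    """Example instrumentation: count executed-instruction classes."""
    profile = Counter()
    instrument(words, lambda pc, insn: profile.update([insn["class"]]))
    return profile
```

The words `0x00500093`, `0xFFF00093`, `0x002081B3`, `0x00000073`, and `0x00008067` encode `addi x1, x0, 5`, `addi x1, x0, -1`, `add x3, x1, x2`, `ecall`, and `ret`; profiling them yields two `OP-IMM` instructions and one each of `OP`, `SYSTEM`, and `JALR`.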

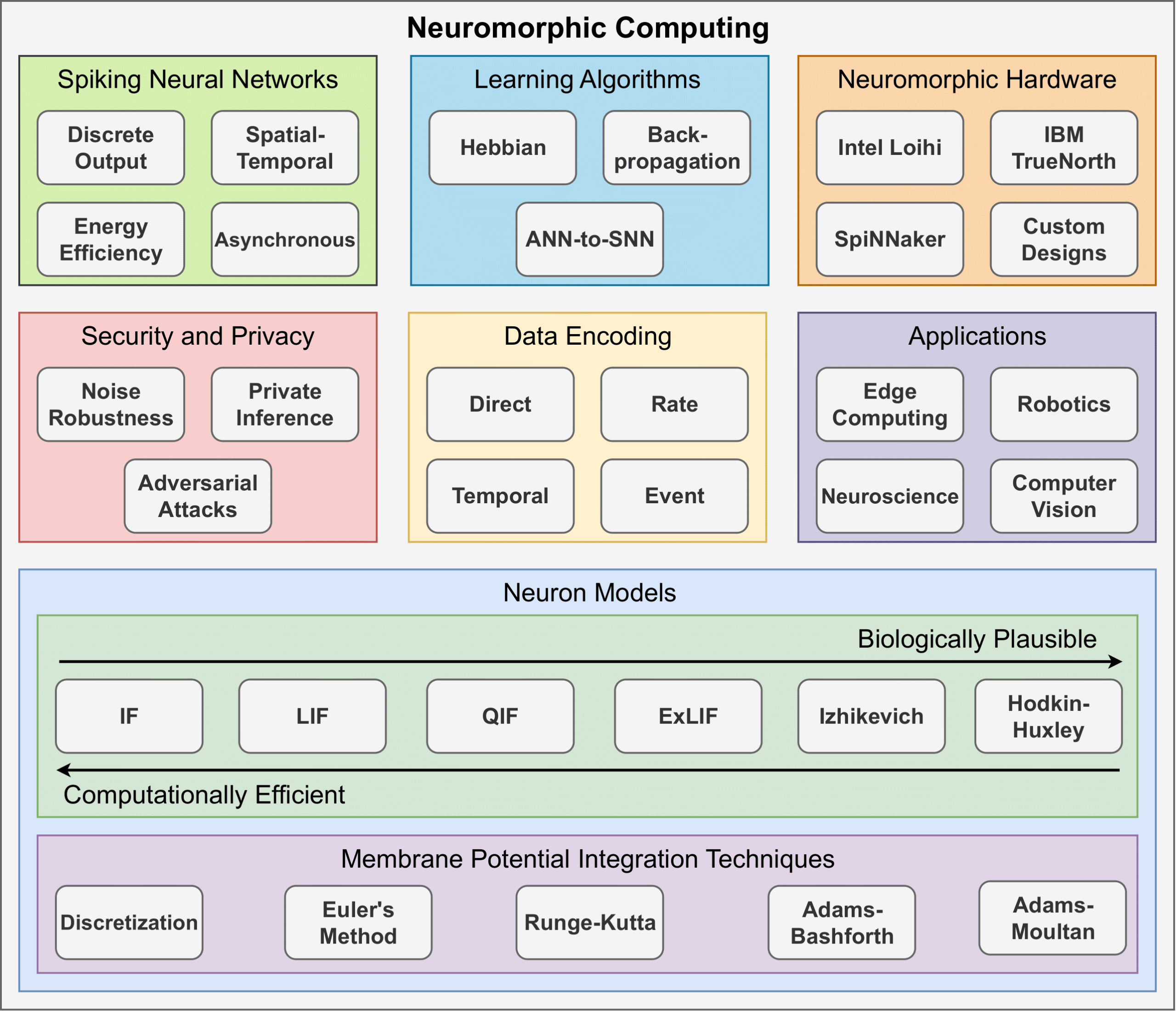

Neuromorphic Computing

Artificial Neural Networks (ANNs) have gained mainstream adoption in recent years, largely due to their success in diverse domains, including computer vision and natural language processing. However, the energy demands of ANNs continue to grow. Neuromorphic computing seeks to mitigate these energy demands by leveraging Spiking Neural Networks (SNNs). Unlike traditional ANNs, which synchronously process continuous-valued data, SNNs operate asynchronously on discrete events known as spikes. These spikes, driven by biologically inspired neuron dynamics, allow SNNs to replicate the brain’s sparse connectivity and energy-efficient structure. As a result, when SNNs are implemented on hardware tailored to these characteristics, they have the potential to operate with lower energy consumption than traditional ANN models.
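The leaky integrate-and-fire (LIF) neuron is the textbook example of such dynamics: its membrane potential decays each step, integrates incoming current, and emits a binary spike and resets when it crosses a threshold. The discrete-time Python sketch below uses that standard model with reset-to-zero; the decay factor and threshold are illustrative values, and it does not represent the specific neuron models our lab is developing.

```python
def lif(inputs, beta=0.9, threshold=1.0):
    """Simulate one discrete-time leaky integrate-and-fire neuron.

    v[t] = beta * v[t-1] + I[t]; emit a spike (1) and reset v to 0 when
    v[t] >= threshold, otherwise emit 0.
    """
    v = 0.0
    spikes = []
    for current in inputs:
        v = beta * v + current
        if v >= threshold:
            spikes.append(1)
            v = 0.0
        else:
            spikes.append(0)
    return spikes
```

With a constant input of 0.5, the potential climbs to 0.5, 0.95, then 1.355, so `lif([0.5] * 6)` returns `[0, 0, 1, 0, 0, 1]`. With zero input the neuron stays silent, and on event-driven hardware silent neurons cost little energy.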

Current efforts within our lab focus on several key areas, including algorithmic improvements to neuron models, exploring new computational models to drive neuron dynamics, designing neuromorphic architectures and accelerators, ensuring secure and private user training and deployment of SNNs, and more.

Fair, Robust, and Data Quality-Aware Federated Learning

As data becomes increasingly distributed across organizations, devices, and individuals, the ability to learn collaboratively without centralizing sensitive information has become both a technological and ethical imperative. Federated learning offers a pathway to harness collective intelligence while preserving privacy and data ownership. However, societal challenges such as unequal access to data, inconsistent data quality, and systemic bias threaten to exacerbate inequities in AI outcomes.

One particular effort focuses on medical institutions seeking to train models on MRI data without sharing patient information. However, differences in data quality, imaging protocols, and demographics can create bias and unreliable performance across institutions. We are exploring how fair and data quality-aware federated learning can mitigate these issues.
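The standard starting point is federated averaging (FedAvg), in which a server averages client model updates weighted by each client's number of samples. The Python sketch below adds a per-client quality score as an extra multiplicative weight, so that, for instance, an institution with noisy or inconsistent scans contributes less. The quality weighting is a hypothetical illustration of data quality-aware aggregation rather than the lab's method, and models are plain lists of floats for simplicity.

```python
def aggregate(updates, num_samples, quality=None):
    """Weighted average of client model vectors (FedAvg-style).

    weight_k = num_samples[k] * quality[k]; quality defaults to 1.0 for every
    client, which reduces to plain FedAvg.
    """
    if not updates or len(updates) != len(num_samples):
        raise ValueError("need one sample count per client update")
    if quality is None:
        quality = [1.0] * len(updates)
    if len(quality) != len(updates):
        raise ValueError("need one quality score per client update")
    weights = [n * q for n, q in zip(num_samples, quality)]
    total = sum(weights)
    if total <= 0:
        raise ValueError("all clients have zero weight")
    dim = len(updates[0])
    return [sum(w * u[i] for w, u in zip(weights, updates)) / total for i in range(dim)]
```

A purely size-based weighting lets a large but low-quality site dominate the global model; scaling each weight by a quality score is one simple way to trade data volume against data reliability, though choosing the score fairly is itself part of the research question.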

Ongoing Tape-Out Efforts

Translational Research Through Full-System Design

The center investigates, designs and prototypes secure full-stack computer systems using hardware-as-root-of-trust techniques and applied cryptography.

We apply an integrative approach consisting of algorithmic optimization, design flow automation, hardware-firmware co-development and prototyping.

We examine and design for (i) the security of the processing elements – data in transformation, (ii) the security of the communication among the processing elements – data in motion, and (iii) the security of the data storage and sharing – data at rest.